The request was fairly simple — iterate through some data and update some dates. Something as simple as this.

    for entry in db.query():
        entry.date = entry.date + timedelta(days=10)
        entry.put()

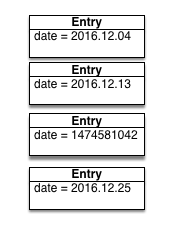

Unfortunately, the NoSQL database we were using did not support schemas and, unbeknownst to me, some data had been changed in unexpected ways on production.

My code seemed correct. Unit tests passed. Code review passed. It ran flawlessly on the test environment. QA signed off on the change. So we deployed it. And things broke.

Most calls to entry.date returned a date object that could be added to a timedelta. But occasional outliers would return an int representing a Unix timestamp.

    TypeError: unsupported operand type(s) for +: 'int' and 'datetime.timedelta'
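To make the failure concrete, here is a minimal sketch that reproduces the error and then tolerates both shapes of data. The helper name shift_date is hypothetical, and the handling rests on an assumption: that the stray integers are Unix timestamps in seconds, interpreted as UTC. Note that this only covers the one surprise I already know about.

```python
from datetime import datetime, timedelta, timezone


def shift_date(value, days=10):
    """Add `days` to a stored date that may be a datetime or an int."""
    if isinstance(value, int):
        # Assumption: integer values are Unix timestamps in seconds (UTC).
        value = datetime.fromtimestamp(value, tz=timezone.utc)
    return value + timedelta(days=days)


# The original failure: an int cannot be added to a timedelta.
try:
    1700000000 + timedelta(days=10)
except TypeError as exc:
    error_message = str(exc)

# Both shapes of data now go through the same code path.
from_datetime = shift_date(datetime(2020, 1, 1))
from_timestamp = shift_date(0)
```

A guard like this fixes the known outlier, but it says nothing about the next unexpected value sitting in production.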

I didn’t anticipate that the data in production differed from the data everywhere else. I didn’t anticipate that whoever changed some dates to integer values hadn’t updated any unit tests. How could I?

Tests are great for errors you can anticipate. Tests are great for preventing regressions. Tests are great for verifying functionality. But tests are not enough. Tests are never enough. Tests are not production.

Any single software deployment has some likelihood of breaking things. The goal of (safe) software development is to minimize that likelihood. So, you need your tests to pass to make sure you covered any anticipated problems and did not introduce any regressions. But you also need to verify that you won’t break production. How?

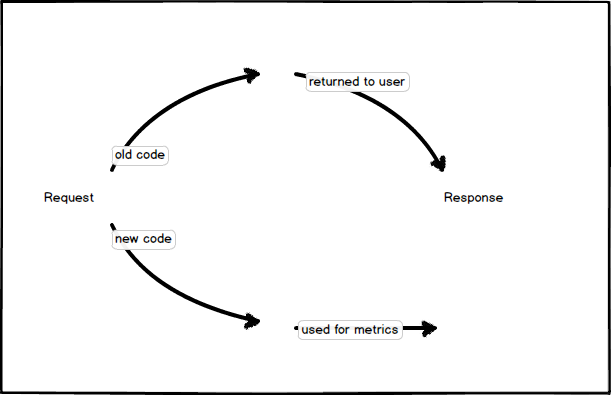

Parallel Change allows you to deploy a change to production and measure its effect, without affecting current behaviour.

As a concrete example, imagine deploying a change to the billing system — a system where mistakes have measurable dollar effects. When a request comes in, you route it to both the old and new code, measuring – in production – the error rate for each.

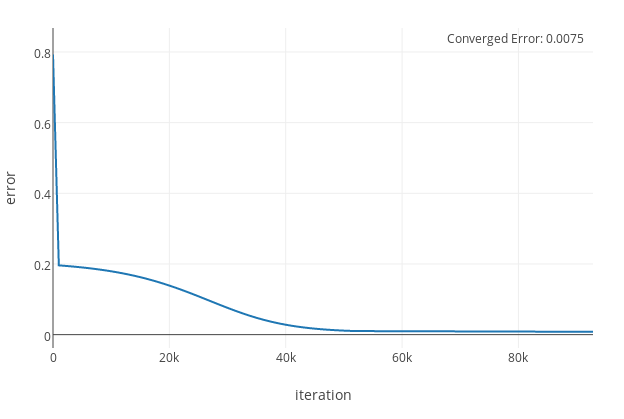

Once the error rate moves below an acceptable threshold, you can remove the parallel change path and route all requests to the new code, comfortable in the knowledge that it is performing as expected on production.
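The routing and measurement described above can be sketched roughly as follows. This is an illustration under stated assumptions, not a definitive implementation: the names ParallelChange, PathStats and ready_to_switch are hypothetical, the threshold and minimum call count are placeholder values, and the new code path is assumed to be free of side effects (in a billing system it must not actually charge anyone while running in parallel).

```python
from dataclasses import dataclass


@dataclass
class PathStats:
    calls: int = 0
    errors: int = 0

    @property
    def error_rate(self):
        return self.errors / self.calls if self.calls else 0.0


class ParallelChange:
    """Run old and new code side by side; callers only see the old result."""

    def __init__(self, old_fn, new_fn):
        self.old_fn = old_fn
        self.new_fn = new_fn
        self.old_stats = PathStats()
        self.new_stats = PathStats()

    def __call__(self, request):
        # New path: count failures, but never let them reach the caller.
        self.new_stats.calls += 1
        try:
            self.new_fn(request)
        except Exception:
            self.new_stats.errors += 1

        # Old path: still authoritative, so its result or error propagates.
        self.old_stats.calls += 1
        try:
            return self.old_fn(request)
        except Exception:
            self.old_stats.errors += 1
            raise

    def ready_to_switch(self, threshold=0.01, min_calls=1000):
        # Assumption: both numbers are placeholders to tune per system.
        enough_data = self.new_stats.calls >= min_calls
        return enough_data and self.new_stats.error_rate < threshold


# Usage: the new path fails on 0, yet the caller still gets the old result.
handler = ParallelChange(old_fn=lambda x: x + 1, new_fn=lambda x: 1 / x)
first = handler(0)
second = handler(1)
```

The design choice that matters is that the old path stays authoritative: errors in the new code are counted, not served, so the experiment cannot change current behaviour.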

As a developer, what would you be more comfortable with? Deploying a change that passed QA, or deploying a change that has been measured on production data? If your job were on the line, what would you do? Tests are never enough. Use data.